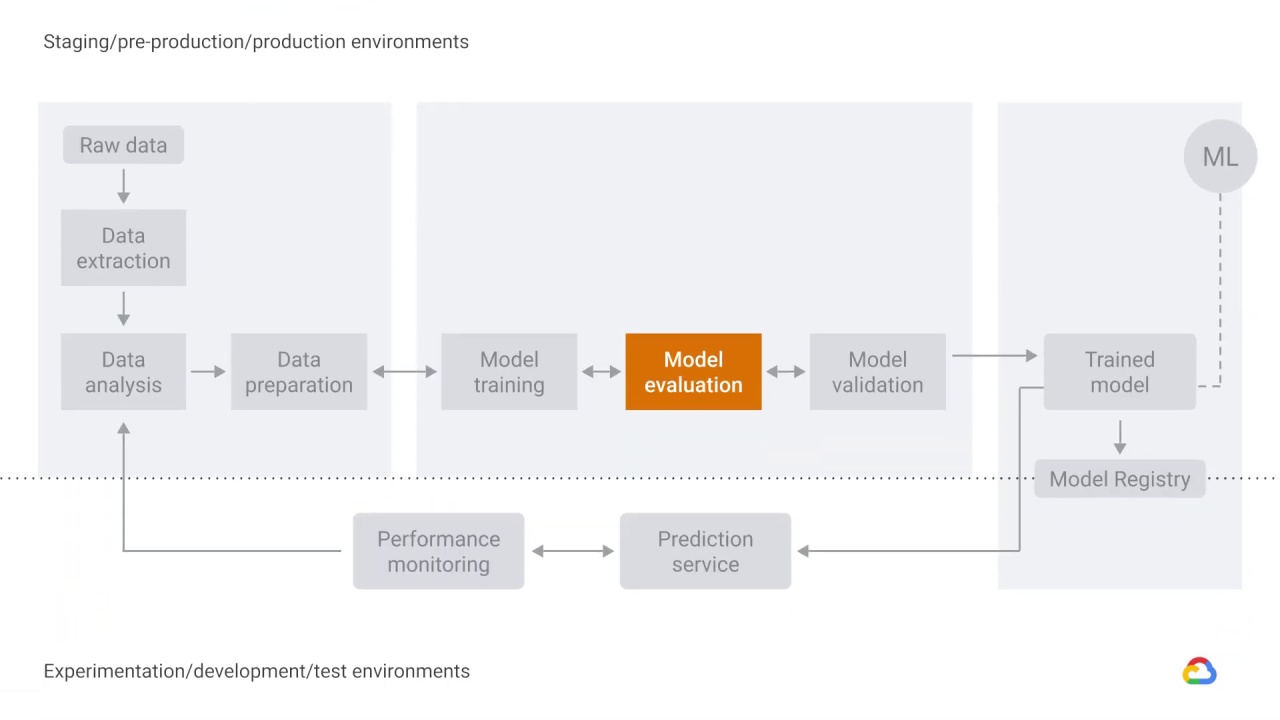

Model training, evaluation, and validation

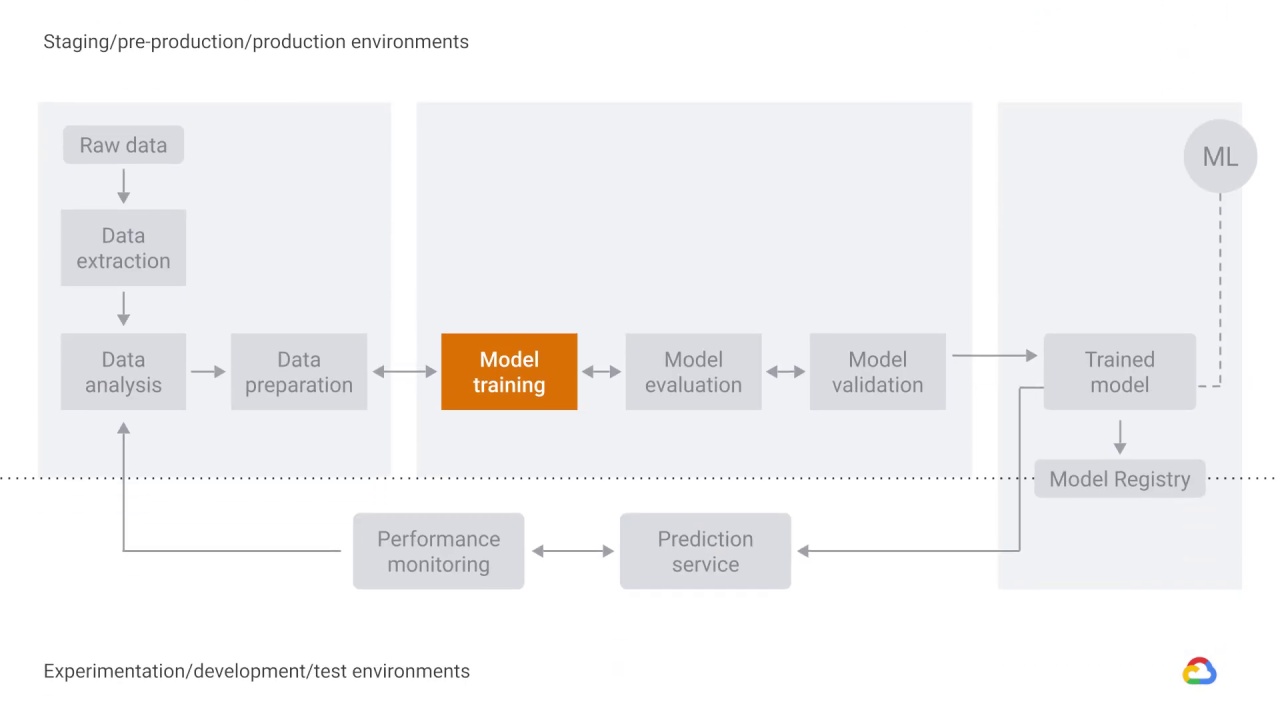

The next step in our workflow is choosing a model that you’ll train.

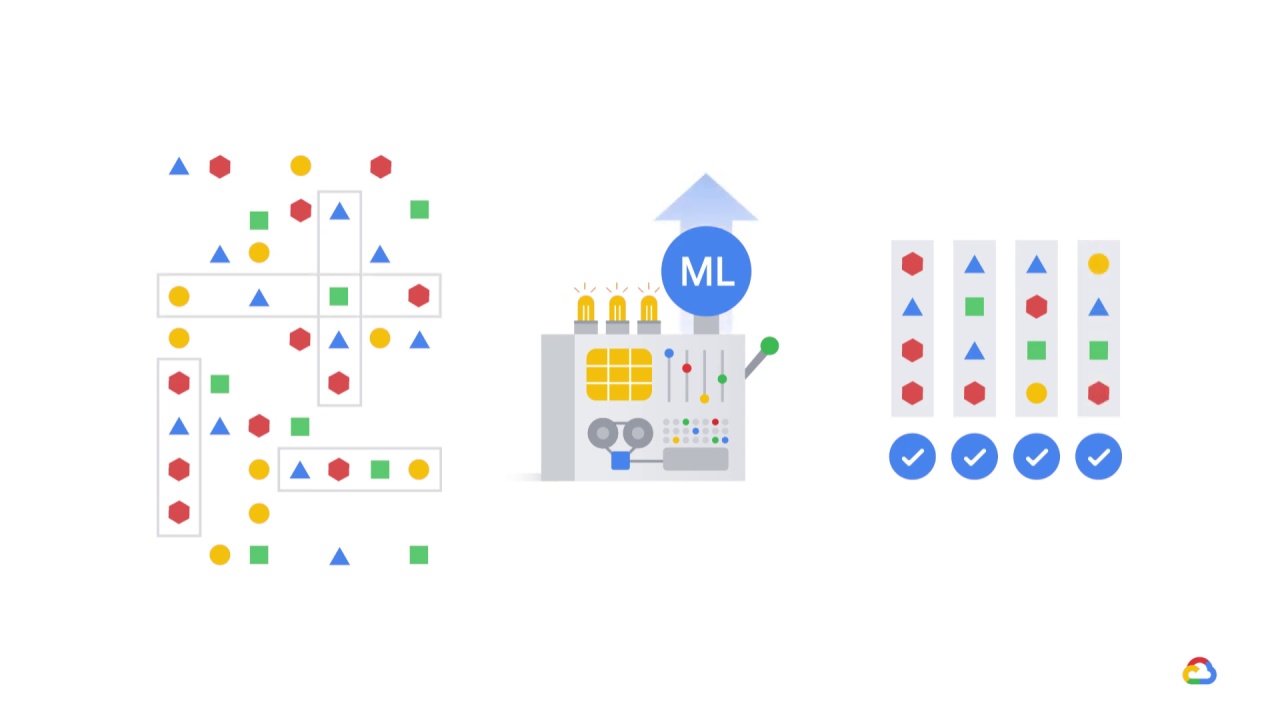

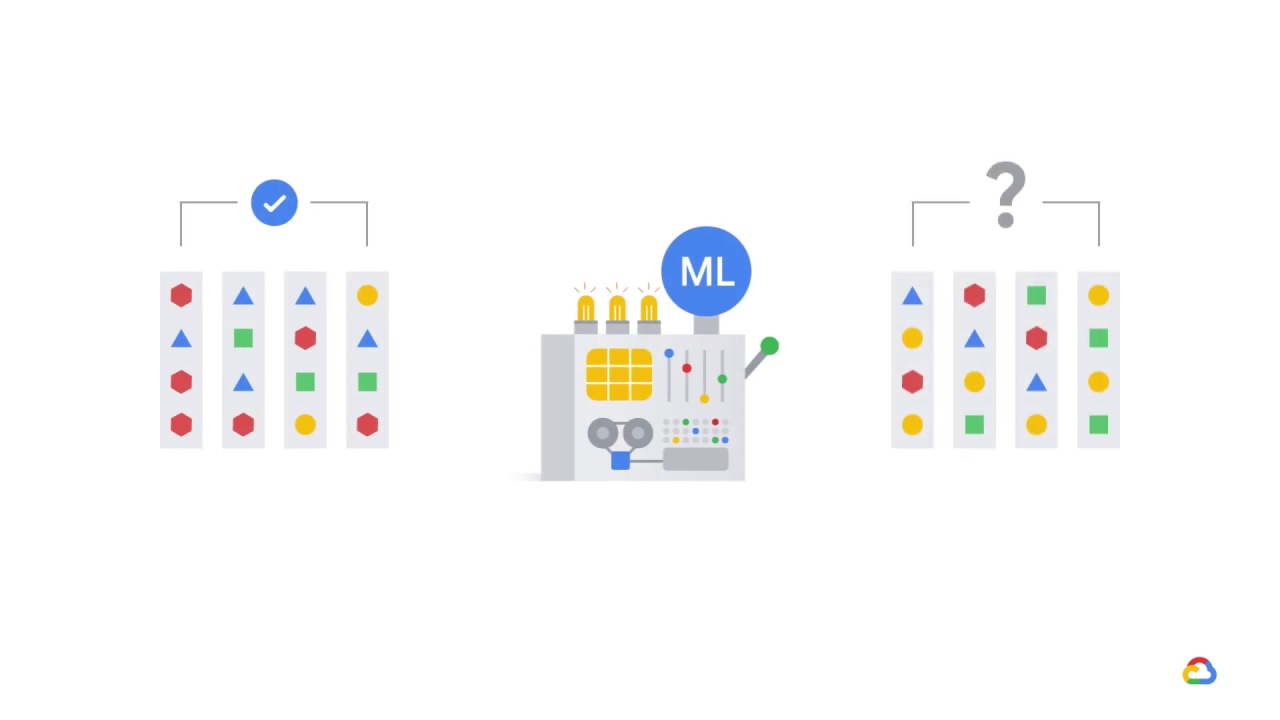

In model training, you implement different machine learning algorithms with the prepared data to train various ML models.

Essentially, model training is the process of feeding an ML algorithm with data to help it identify and learn good values for the model’s parameters, such as the weights applied to each feature.

So, you use your data to incrementally improve your model’s predictive ability.
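To make "incrementally improve" concrete, here is a minimal sketch of a training loop. It assumes a one-feature linear model trained with plain gradient descent on made-up data; the names `XS`, `YS`, `train`, and `mean_squared_error` are illustrative only and are not part of any particular library or of this course's pipeline.

```python
# Toy training data drawn from y = 2x + 1 (made-up values for illustration).
XS = [0.0, 1.0, 2.0, 3.0, 4.0]
YS = [1.0, 3.0, 5.0, 7.0, 9.0]


def mean_squared_error(w, b):
    """Average squared gap between predictions w*x + b and the true labels."""
    return sum((w * x + b - y) ** 2 for x, y in zip(XS, YS)) / len(XS)


def train(steps=2000, learning_rate=0.05):
    """Run plain gradient descent and record the loss after every update."""
    w, b = 0.0, 0.0  # start from uninformed parameter values
    n = len(XS)
    losses = []
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(XS, YS)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(XS, YS)) / n
        # Step against the gradient to reduce the error a little.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
        losses.append(mean_squared_error(w, b))
    return w, b, losses


w, b, losses = train()
print(f"learned w={w:.3f}, b={b:.3f}")
print(f"loss after first step={losses[0]:.3f}, after last step={losses[-1]:.6f}")
```

Each pass through the loop nudges the parameters so the error shrinks, which is the sense in which the data improves the model's predictive ability. On this toy data the learned values should settle close to the 2 and 1 used to generate it.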

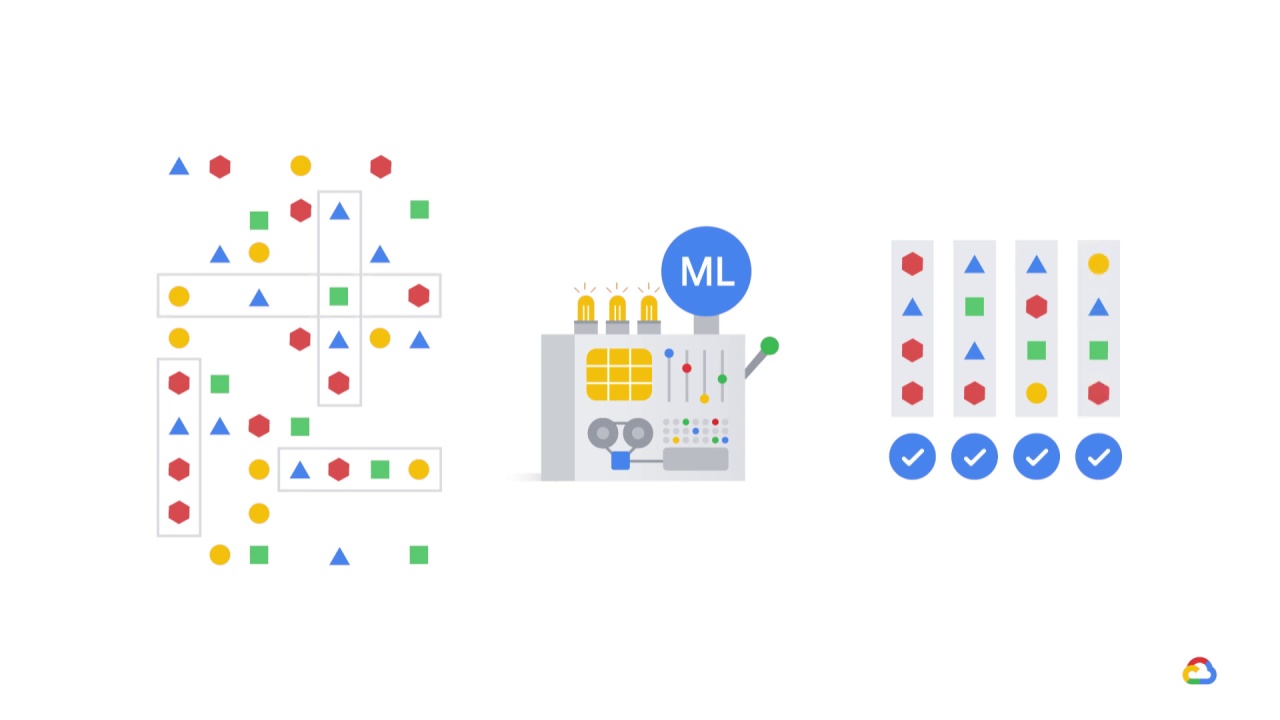

Model evaluation aims to estimate the generalization accuracy of a model on future, unseen, or out-of-sample data.

While training a model is a key step in the pipeline, how the model generalizes on unseen data is an equally important aspect that should be considered in every machine learning pipeline.

This means that we need to know whether the model actually works and, consequently, whether we can trust its predictions.

Could the model be merely memorizing the data it’s fed, and therefore be unable to make good predictions on future samples, or samples that it hasn’t seen before, such as the test set?
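One way to see the difference between memorizing and generalizing is to compare accuracy on the training set with accuracy on held-out data. The sketch below is an illustration under stated assumptions: the data is made up (the label is 1 when x is at least 5), and `train_memorizer` and `train_threshold` are hypothetical toy models, not real library functions.

```python
def accuracy(model, examples):
    """Fraction of (x, label) pairs the model predicts correctly."""
    return sum(1 for x, y in examples if model(x) == y) / len(examples)


def train_memorizer(examples):
    """Store every training example; guess 0 for anything unseen."""
    table = dict(examples)
    return lambda x: table.get(x, 0)


def train_threshold(examples):
    """Learn a single cut-off halfway between the two classes."""
    largest_negative = max(x for x, y in examples if y == 0)
    smallest_positive = min(x for x, y in examples if y == 1)
    cutoff = (largest_negative + smallest_positive) / 2
    return lambda x: 1 if x > cutoff else 0


# Made-up data: the label is 1 when x >= 5. The test set shares no x values
# with the training set, so it plays the role of unseen data.
TRAIN_SET = [(1, 0), (2, 0), (3, 0), (6, 1), (7, 1), (8, 1)]
TEST_SET = [(0, 0), (4, 0), (5, 1), (9, 1), (10, 1), (11, 1)]

memorizer = train_memorizer(TRAIN_SET)
threshold_model = train_threshold(TRAIN_SET)

for name, model in [("memorizer", memorizer), ("threshold", threshold_model)]:
    print(name,
          "train accuracy:", round(accuracy(model, TRAIN_SET), 2),
          "test accuracy:", round(accuracy(model, TEST_SET), 2))
```

Both models are perfect on the training set, so training accuracy alone cannot tell them apart. Only the held-out test set reveals that the memorizer has not learned anything it can apply to new samples.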

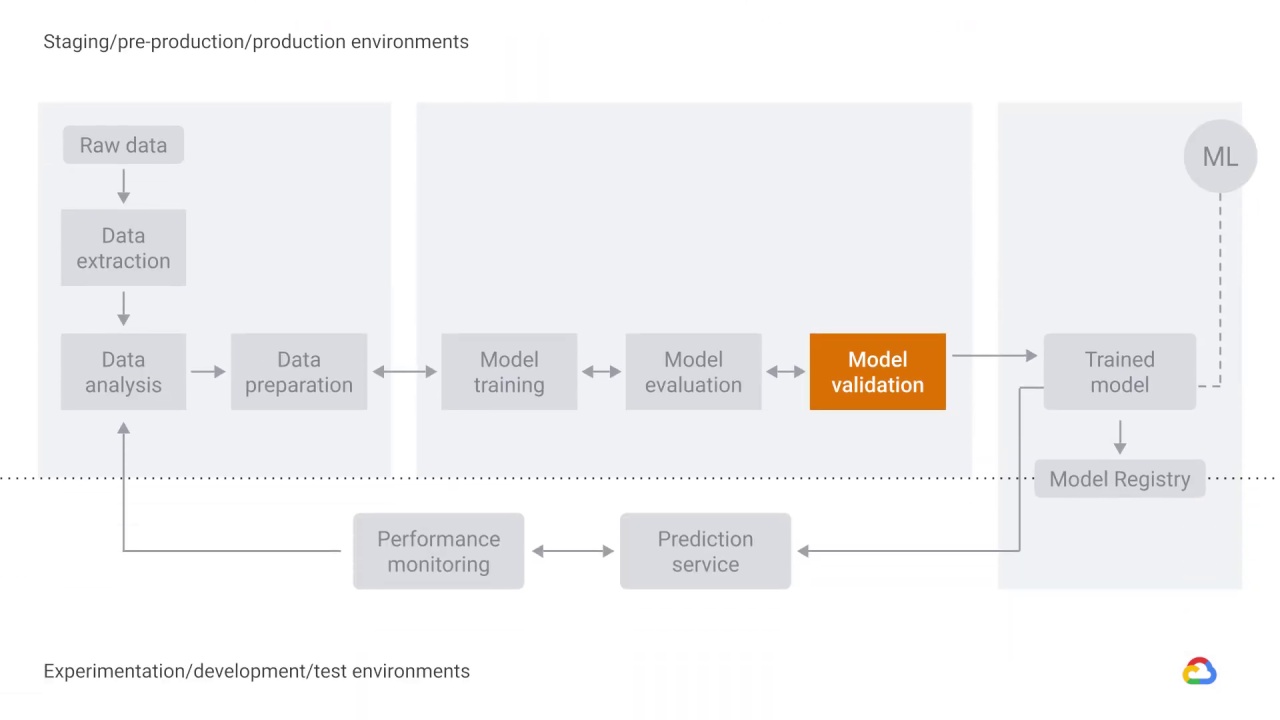

Model evaluation consists of a person or group of people evaluating or assessing the model with respect to some business-relevant metric, like AUC (area under the curve) or cost-weighted error.
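As a rough illustration of the two metrics just mentioned, the sketch below computes AUC as the fraction of positive/negative pairs that the model ranks correctly, and a cost-weighted error that charges more for some mistakes than others. The labels, scores, decision threshold, and the costs `fp_cost` and `fn_cost` are all assumptions chosen for the example; in practice the costs come from the business, and definitions of cost-weighted error vary.

```python
def auc(labels, scores):
    """Chance that a random positive outranks a random negative (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def cost_weighted_error(labels, scores, threshold=0.5, fp_cost=1.0, fn_cost=5.0):
    """Average cost per example, with assumed costs for each kind of mistake."""
    total = 0.0
    for y, s in zip(labels, scores):
        predicted = 1 if s >= threshold else 0
        if predicted == 1 and y == 0:
            total += fp_cost  # false positive
        elif predicted == 0 and y == 1:
            total += fn_cost  # false negative
    return total / len(labels)


# Made-up labels and model scores for four examples.
LABELS = [0, 0, 1, 1]
SCORES = [0.1, 0.4, 0.35, 0.8]

print("AUC:", auc(LABELS, SCORES))                                  # 0.75
print("cost-weighted error:", cost_weighted_error(LABELS, SCORES))  # 1.25
```

Here three of the four positive/negative pairs are ranked correctly, giving an AUC of 0.75, and the single false negative at the assumed cost of 5 spread over four examples gives 1.25. A person assessing the model would compare numbers like these against whatever criteria the business has agreed on.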

If the model meets their criteria, then the model is moved from the assessment phase to development.

For example, in the development phase, you may want to adjust hyperparameter values to increase the model’s performance, which should show up as improved evaluation results.

After development, the model is ready for a live experiment or real-world test.

In contrast to the Model Evaluation component, which is performed by humans, the Model Validation component evaluates the model against fixed thresholds and alerts engineers when things go awry.
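An automated check against fixed thresholds can be sketched as follows. This is a simplified stand-in, not the actual validation component: the function `validate_model`, the metric names, and the minimum values in `MINIMUMS` are all hypothetical and chosen only for illustration.

```python
def validate_model(metrics, minimums):
    """Return one alert message per metric that is missing or below its minimum."""
    alerts = []
    for name, minimum in minimums.items():
        value = metrics.get(name)
        if value is None:
            alerts.append(f"{name}: metric missing")
        elif value < minimum:
            alerts.append(f"{name}: {value:.3f} is below the minimum {minimum:.3f}")
    return alerts


# Assumed fixed thresholds; real values would be agreed on by the team.
MINIMUMS = {"auc": 0.90, "accuracy": 0.85}

# Made-up metrics for a candidate model.
candidate_metrics = {"auc": 0.91, "accuracy": 0.84}

alerts = validate_model(candidate_metrics, MINIMUMS)
passed = not alerts  # the model passes only if no threshold was violated

for message in alerts:
    print("ALERT:", message)
print("passed validation:", passed)
```

No human judgment is involved here: the thresholds are fixed up front, and the check either passes or produces alerts for engineers to look into.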

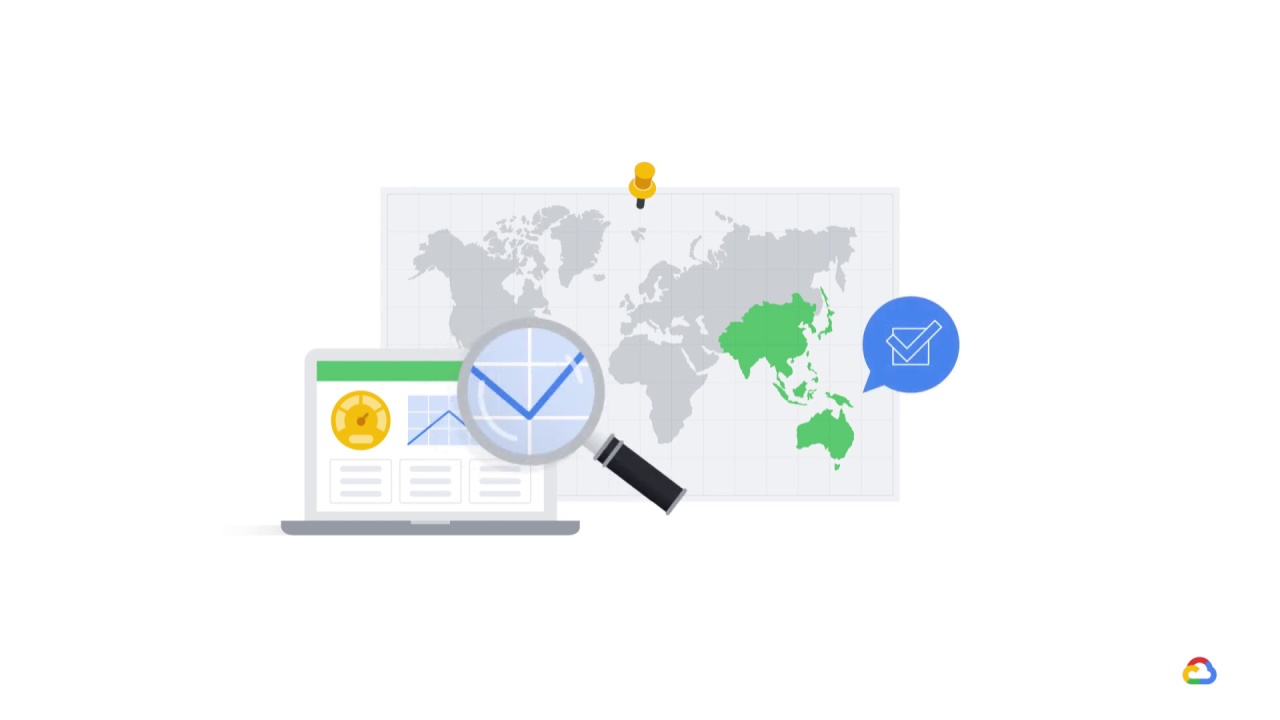

One common test is to look at performance by slice.

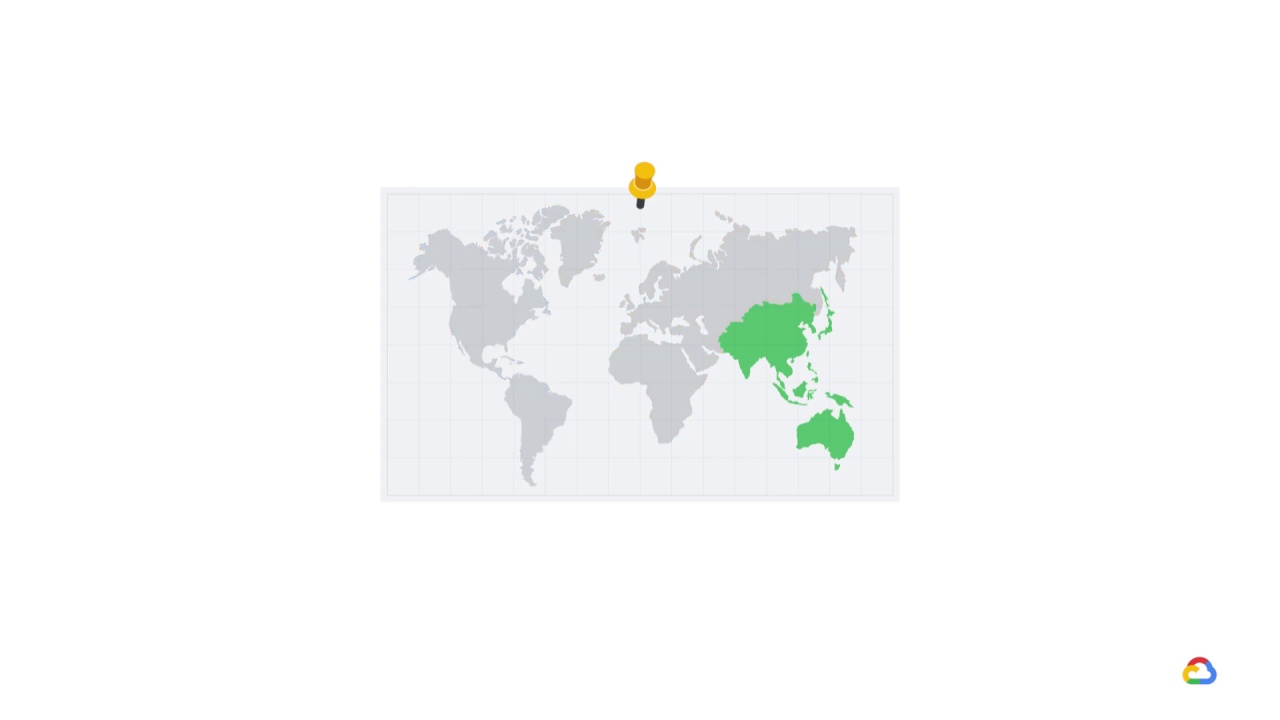

Let’s say, for example, business stakeholders care strongly about a particular geographic market region.

An alert can be set to notify engineers when the accuracy by country begins to skew downward.
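A per-slice check along these lines might look like the sketch below. It assumes each evaluation record carries a country code, a true label, and a prediction; the records, the helper names `accuracy_by_slice` and `slices_below`, and the 0.8 minimum are made up for illustration. For simplicity it compares each slice against a fixed minimum rather than tracking a downward trend over time.

```python
from collections import defaultdict


def accuracy_by_slice(records):
    """Compute accuracy separately for each slice key (here, a country code)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for slice_key, label, prediction in records:
        total[slice_key] += 1
        if label == prediction:
            correct[slice_key] += 1
    return {key: correct[key] / total[key] for key in total}


def slices_below(slice_accuracy, minimum):
    """Return the slices whose accuracy falls under the given minimum."""
    return sorted(key for key, acc in slice_accuracy.items() if acc < minimum)


# Made-up evaluation records: (country, true label, predicted label).
RECORDS = [("US", 1, 1)] * 9 + [("US", 1, 0)] + [("BR", 1, 1), ("BR", 0, 1)]

overall = sum(1 for _, y, p in RECORDS if y == p) / len(RECORDS)
per_country = accuracy_by_slice(RECORDS)
flagged = slices_below(per_country, minimum=0.8)

print("overall accuracy:", round(overall, 3))
print("accuracy by country:", per_country)
print("countries to alert on:", flagged)
```

In this example the overall accuracy stays above the 0.8 minimum while one country sits well below it, which is exactly the kind of problem that an aggregate metric hides and a slice-level alert catches.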

The model evaluation and validation components have one responsibility: to ensure that the models are ‘good’ before moving them into a production environment.