Optimizing TensorFlow for mobile

Let’s look at a second scenario where hybrid models are necessary.

Earlier, we explored how more and more mobile applications are incorporating machine learning.

Take Google Translate, for example, which is composed of several models.

It uses one model to find a sign, another model to read the sign using optical character recognition, a third model to translate the sign, a fourth model to superimpose the translated text, and possibly even a fifth model to select the best font to use.

ML allows you to add some “intelligence” to your mobile apps, such as image and voice recognition, translation, and natural language processing.

You can also apply machine learning to gain smarter analytics on mobile-specific data, for example to detect certain patterns in motion sensor data or GPS tracking data.

This is all thanks to the fact that ML can extract meaning from raw data.

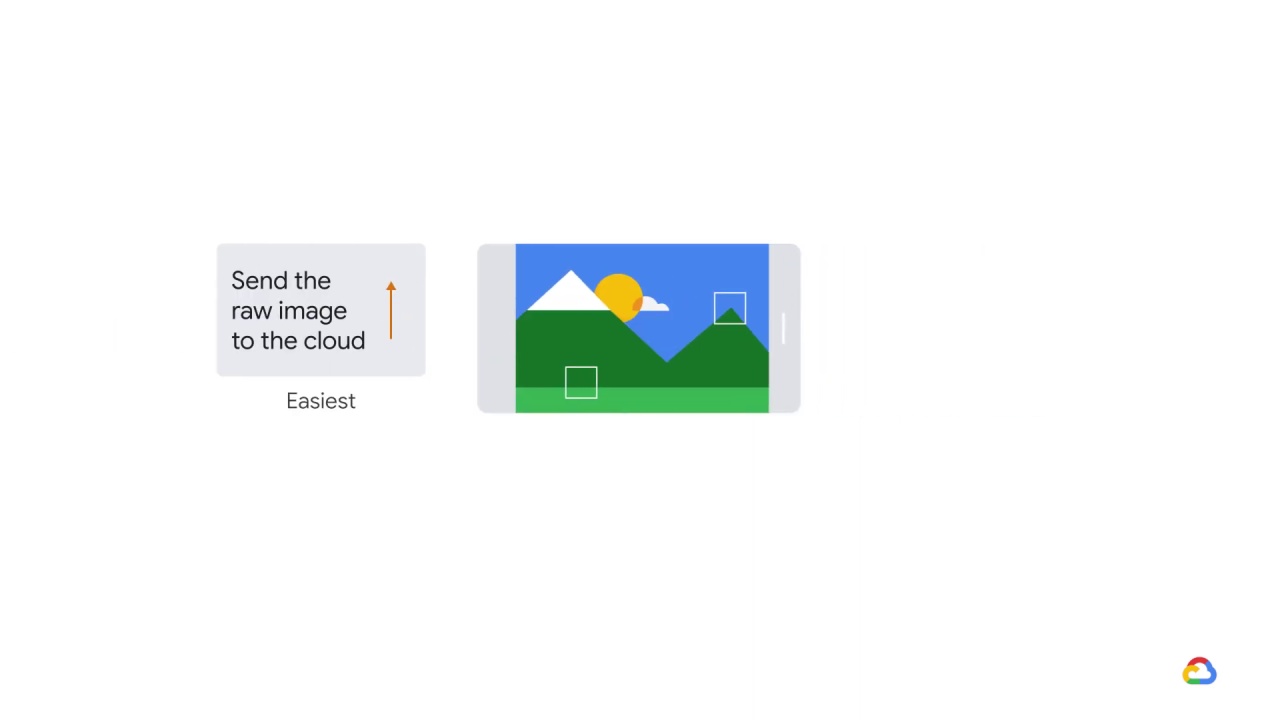

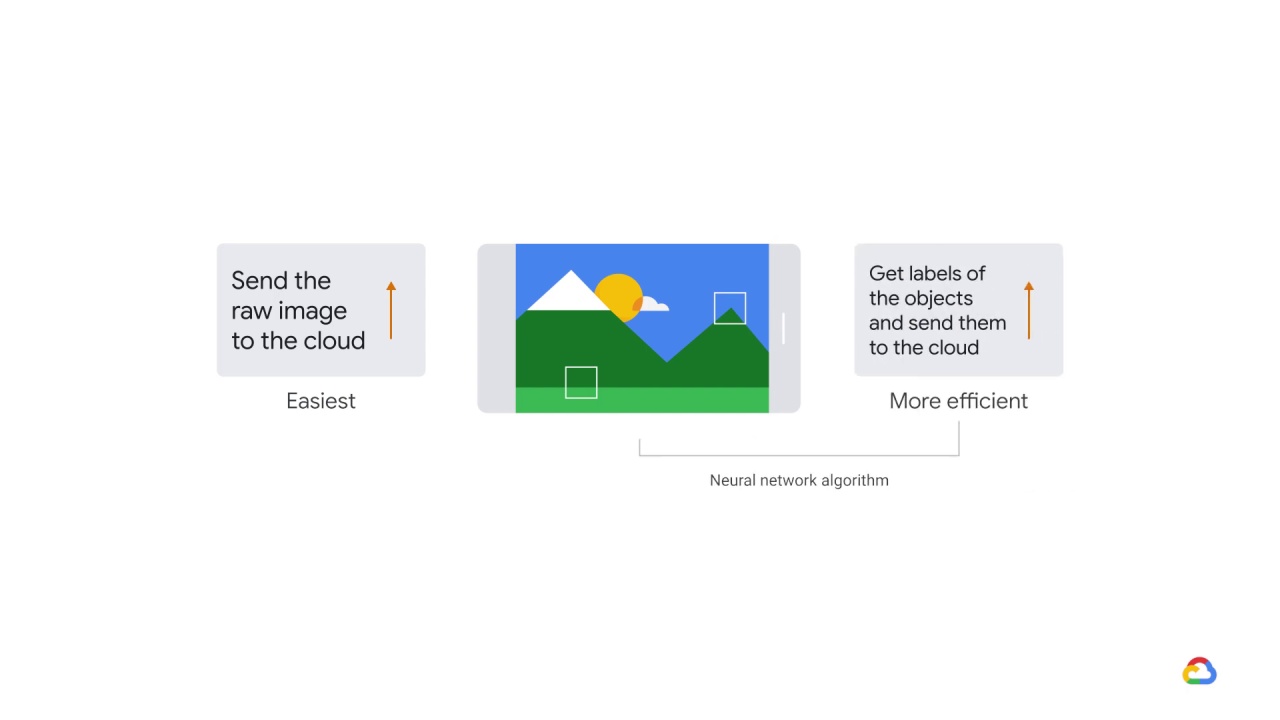

So, if you want to perform image recognition with your mobile app, the easiest way is to send the raw image to the cloud, and let the cloud service recognize the objects in the image.

However, if you have a neural network algorithm running on your mobile app, you can get labels of the objects and send them to the cloud.

It’s a more efficient way to collect the object labels on the cloud service, because a handful of labels is far smaller than the raw image.
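To make the difference concrete, here is a minimal sketch comparing the two upload sizes. The `classify_on_device` function is a hypothetical stand-in that returns fixed labels, and the 640x480 uncompressed RGB image is an assumed example size; neither comes from a real app.

```python
import json

# Hypothetical stand-in for an on-device model. A real app would run an
# embedded neural network here instead of returning fixed labels.
def classify_on_device(image_bytes):
    return [
        {"label": "street sign", "score": 0.92},
        {"label": "building", "score": 0.81},
    ]

# Assumed example: an uncompressed 640x480 RGB image (3 bytes per pixel).
raw_image = bytes(640 * 480 * 3)

# Option 1: upload the raw image and let the cloud do the recognition.
raw_size = len(raw_image)

# Option 2: recognize on the device and upload only the labels.
labels = classify_on_device(raw_image)
label_payload = json.dumps(labels).encode("utf-8")
label_size = len(label_payload)

print(f"raw image upload: {raw_size} bytes")
print(f"label upload:     {label_size} bytes")
```

A real image would usually be compressed before upload, so the gap would be smaller than in this sketch, but the label payload would still be much smaller than the image.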

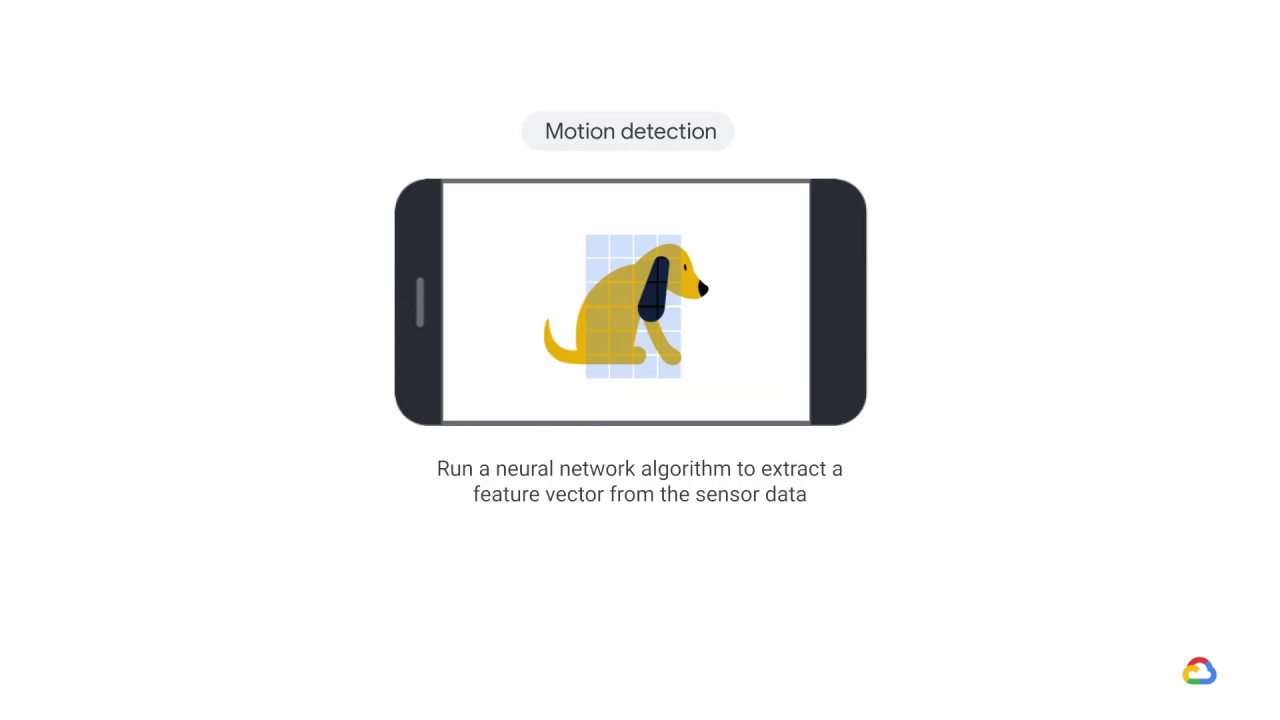

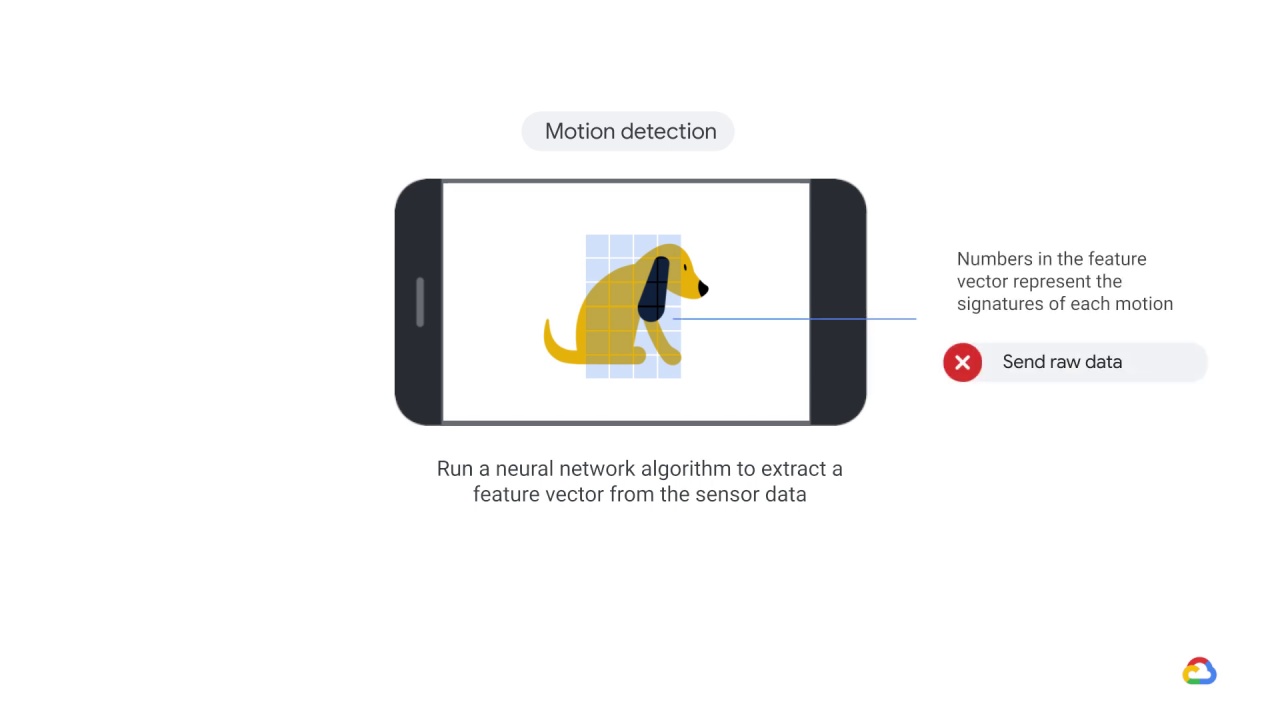

Now let’s say you perform motion detection with your mobile app.

In this case, you can run a neural network algorithm to extract a feature vector from the sensor data.

The numbers in the feature vector represent the “signatures” of each motion.

This means you don’t have to send the raw motion data to a cloud service.
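The idea of replacing raw sensor data with a short feature vector can be sketched as follows. Note that this sketch swaps in simple hand-computed statistics for the neural network described above, and the accelerometer samples are synthetic; `extract_features` is a hypothetical helper used only for illustration.

```python
import math

def extract_features(window):
    """Summarize a window of (x, y, z) accelerometer samples as 4 numbers.

    Hand-crafted statistics stand in for the learned features a neural
    network would produce.
    """
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in window]
    n = len(magnitudes)
    mean = sum(magnitudes) / n
    variance = sum((m - mean) ** 2 for m in magnitudes) / n
    return [mean, math.sqrt(variance), min(magnitudes), max(magnitudes)]

# Synthetic data: 100 samples of gravity plus a small oscillation on the z axis.
window = [(0.0, 0.0, 9.8 + math.sin(i / 5.0)) for i in range(100)]

features = extract_features(window)

raw_value_count = len(window) * 3      # numbers to send if uploading raw data
feature_value_count = len(features)    # numbers to send if uploading features

print(f"raw values: {raw_value_count}, feature values: {feature_value_count}")
```

Here the device would send 4 numbers per window instead of 300, which is the kind of saving the feature-vector approach is after.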

Also, by applying machine learning to mobile apps, you can reduce network bandwidth and get faster response times when communicating with cloud services.

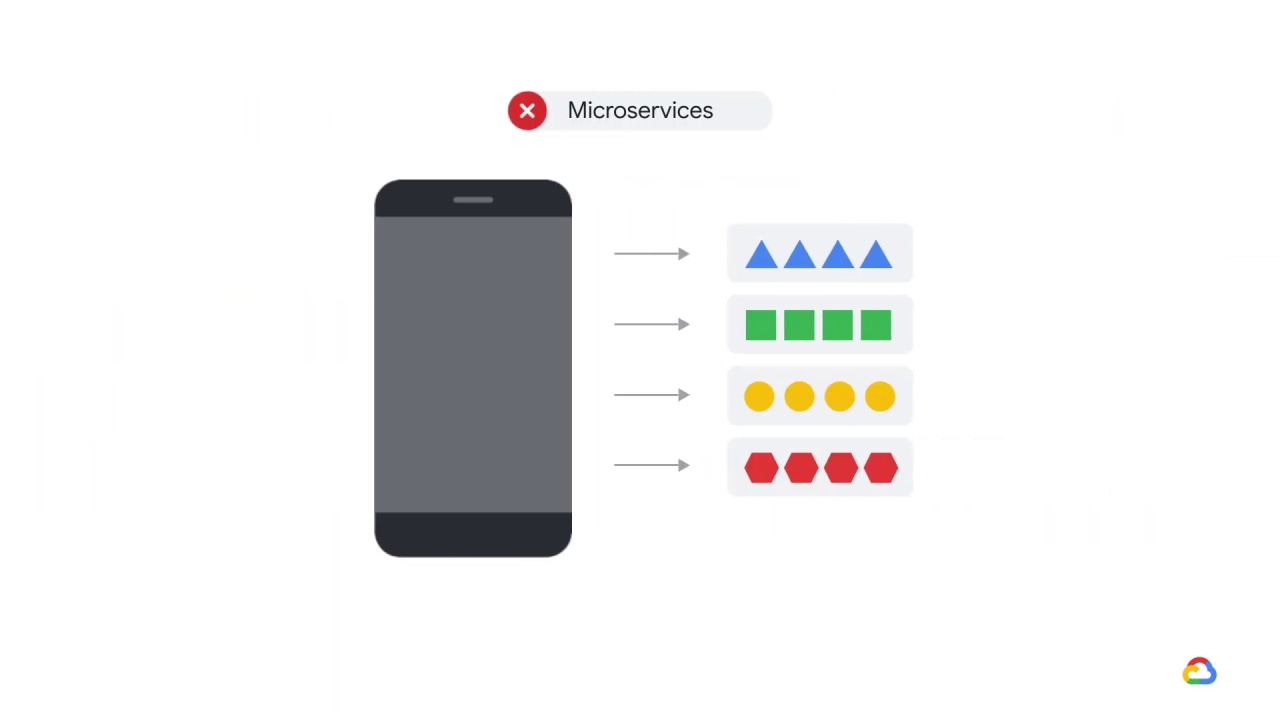

It’s important to note that you often can’t use the microservices approach for mobile devices, as the network calls to a remote service can add unwanted latency.

Since you can’t delegate to a microservice, like you can when running in the cloud, you’ll now want a library, not a process.

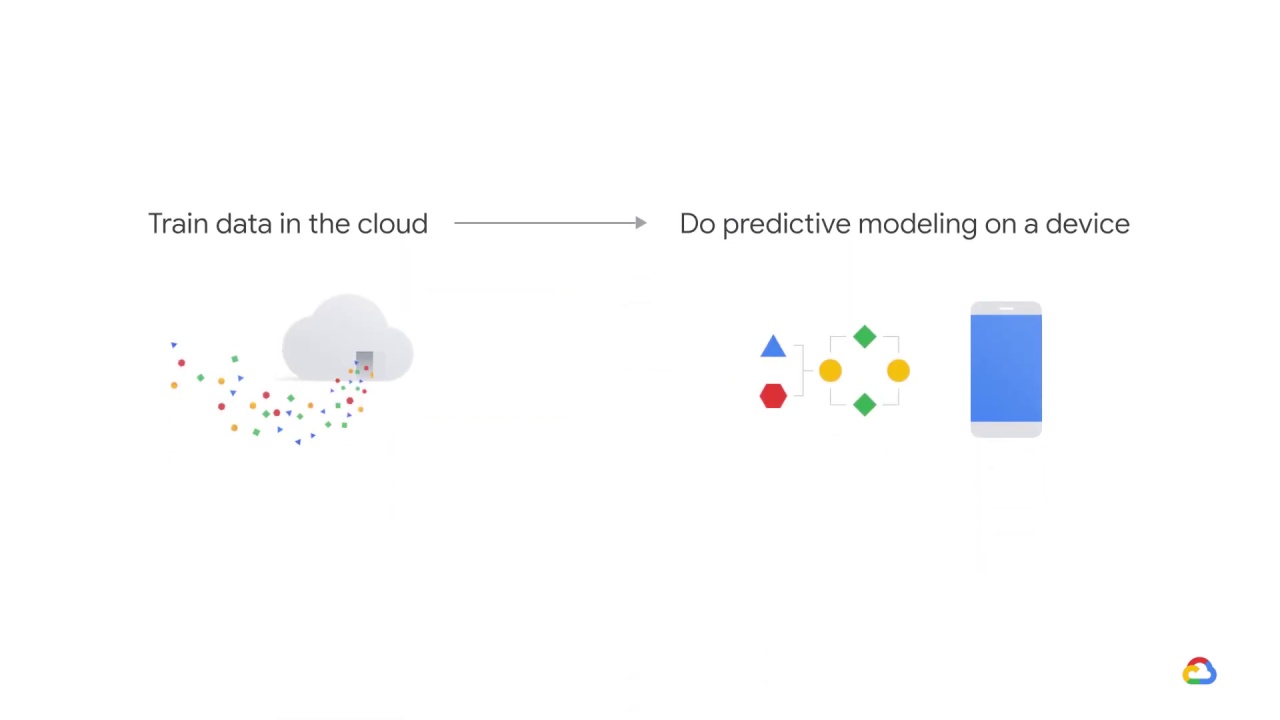

In these types of situations, it’s best to train models in the cloud and do predictive modeling on a device.

This means embedding the model within the device itself.
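As a rough illustration of "a library, not a process", the sketch below serializes a model on the "cloud" side and loads it in process on the "device" side. The weights are made up, and `export_model` and `EmbeddedModel` are hypothetical names; plain Python stands in for TensorFlow here so that the example stays self-contained.

```python
import json
import math

# "Cloud" side: pretend training produced these logistic-regression
# parameters. The values are made up for illustration.
def export_model():
    return json.dumps({"weights": [0.8, -0.5], "bias": 0.1})

# "Device" side: the serialized model ships with the app and is loaded in
# process, so a prediction is a function call, not a network request.
class EmbeddedModel:
    def __init__(self, serialized):
        params = json.loads(serialized)
        self.weights = params["weights"]
        self.bias = params["bias"]

    def predict(self, features):
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        return 1.0 / (1.0 + math.exp(-z))  # sigmoid maps z to a 0..1 score

model = EmbeddedModel(export_model())
score = model.predict([1.0, 2.0])
print(f"on-device score: {score:.3f}")
```

With TensorFlow, the equivalent workflow is typically to train the model in the cloud, convert it to a compact on-device format such as TensorFlow Lite, and bundle the resulting file with the app.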